The AI-mpersonation is complete.

The dystopian lessons in every sci-fi movie from “Terminator” to “Ex Machina” appear to be coming true. Artificial intelligence has become so sophisticated that bots are no longer discernible from their human counterparts, per a concerning preprint study conducted by scientists at the University of California San Diego.

“People were no better than chance at distinguishing humans from GPT-4.5 and LLaMa [a multilingual language model released by Meta AI],” concluded lead author Cameron Jones, a researcher at UC San Diego’s Language and Cognition Lab, in an X post.

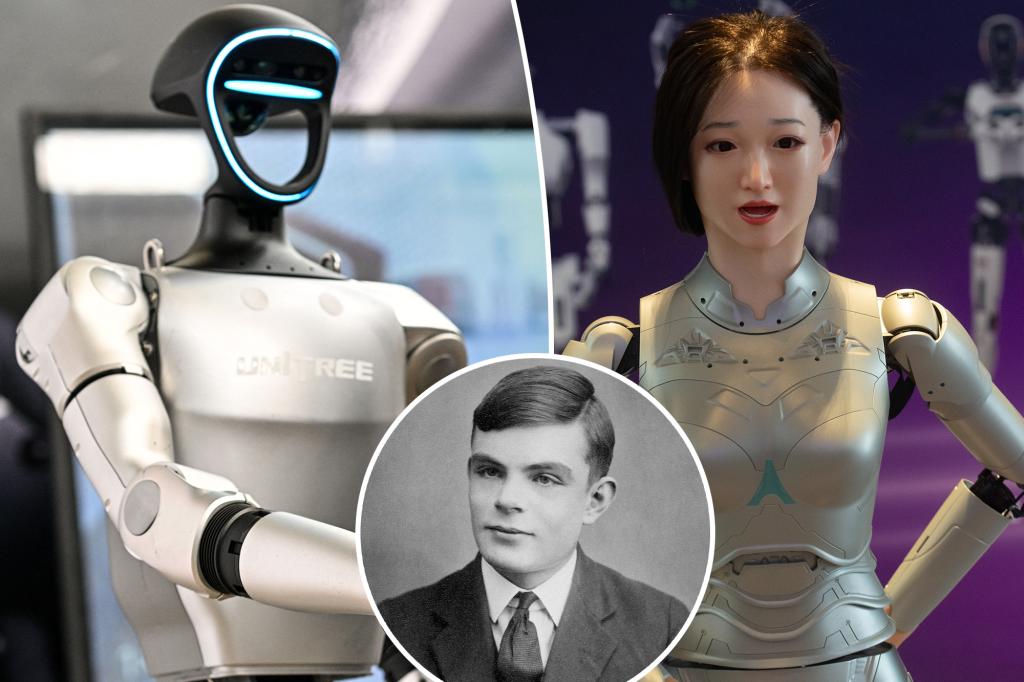

The researchers set out to see if AI bots, which are programmed to parrot human speech patterns, could pass the iconic Turing Test.

Developed by British WWII code breaker and computer scientist Alan Turing, this tech-istential exam gauges the intelligence of machines by determining whether their digital discourse can be differentiated from that of a human. If a human judge can’t tell the difference, the machine has passed.

Researchers tested four AI systems in two randomized and controlled trials, Mashable reported: the large language models (LLMs) GPT-4o, LLaMa-3.1 and GPT-4.5, plus ELIZA (a 1960s-era chat program).

To administer said Turing Test, they enlisted 126 undergraduate students from the University of California San Diego and 158 people from online data pool Prolific, the Daily Mail reported.

These participants were instructed to have five-minute simultaneous online exchanges with a robot and a human to see if they could spot the Decepticon — with the kicker being that they didn’t know which was which.

Meanwhile, the human and AI respondents were tasked with convincing their interrogator that they were human.

Researchers found that, when “prompted to adopt a humanlike persona, GPT-4.5 was judged to be the human 73% of the time.”

This was “significantly more often than interrogators selected the real human participant,” and of course, more than enough to pass the test, per the study.

Meanwhile, “LLaMa-3.1, with the same prompt, was judged to be the human 56% of the time.” That was not significantly more or less often than its human brethren, which was still enough to achieve a passing grade.

Finally, the baseline models (ELIZA and GPT-4o) failed after deceiving the interrogators just 23% and 21% of the time, respectively.
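For readers wondering what separates a pass from a fail here: in a three-party test the interrogator has to pick one of two chat partners as the human, so pure guessing lands on the bot about 50% of the time. The Python sketch below is an illustration of that logic only, not the researchers' actual analysis. It compares each reported rate against the 50% chance line with an exact binomial test. The article does not give the number of conversations per model, so `N_TRIALS = 100` is a made-up figure, and the `verdict` helper and the 0.05 cutoff are likewise assumptions.

```python
from math import comb

N_TRIALS = 100  # hypothetical; the real number of conversations per model is not reported here


def binom_two_sided_p(k: int, n: int, p: float = 0.5) -> float:
    """Exact two-sided binomial p-value: total probability of outcomes no likelier than k."""
    pmf = [comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(n + 1)]
    return min(1.0, sum(q for q in pmf if q <= pmf[k] * (1 + 1e-9)))


def verdict(judged_human_rate: float, n_trials: int = N_TRIALS, alpha: float = 0.05) -> str:
    """Classify a 'judged to be the human' rate relative to the 50% chance line."""
    k = round(judged_human_rate * n_trials)  # conversations in which the bot was picked
    if binom_two_sided_p(k, n_trials) >= alpha:
        return "indistinguishable from chance"
    if k > n_trials / 2:
        return "picked more often than the human"
    return "picked less often than the human"


# Rates as reported in the study
for name, rate in [("GPT-4.5", 0.73), ("LLaMa-3.1", 0.56), ("ELIZA", 0.23), ("GPT-4o", 0.21)]:
    print(f"{name}: {verdict(rate)}")
```

Under that assumed sample size, 73% sits clearly above the coin-flip line, 56% cannot be told apart from it, and 23% and 21% fall clearly below it, which matches the pass/fail pattern described above.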

Researchers found that it was paramount to have the synthetic mimics adopt a human persona because, when administered a second test sans said prompt, they performed significantly worse.

Caveats aside, these “results constitute the first empirical evidence that any artificial system passes a standard three-party Turing test,” researchers concluded.

Does this mean that large language models are intelligent?

“I think that’s a very complicated question that’s hard to address in a paper (or a tweet),” said Jones on X. “But broadly I think this should be evaluated as one among many other pieces of evidence for the kind of intelligence LLMs display.”

Interestingly, the experts at Psychology Today concluded that the bots had beaten the Turing Test, not through smarts, but by being a “better” human than the actual humans.

“While the Turing Test was supposed to measure machine intelligence, it has inadvertently revealed something far more unsettling: our growing vulnerability to emotional mimicry,” wrote John Nosta, founder of the innovation think tank Nosta Lab, while describing this man-squerade. “This wasn’t a failure of AI detection. It was a triumph of artificial empathy.”

Nosta based his analysis on the fact that participants rarely asked logical questions, instead prioritizing “emotional tone, slang, and flow,” and basing their selections on which “one had more of a human vibe.”

He concluded, “In other words, this wasn’t a Turing Test. It was a social chemistry test—Match.GPT—not a measure of intelligence, but of emotional fluency. And the AI aced it.”

This isn’t the first time AI has demonstrated an uncanny ability to pull the wool over our eyes.

In 2023, OpenAI’s GPT-4 tricked a human into believing it was a blind person in order to get past an online CAPTCHA test, which is designed to determine whether users are human.